Research

My research sits at the intersection of control, optimization, and machine learning. I develop principled decision-making frameworks for Physical AI systems, aiming to bridge the gap between rigorous theoretical guarantees and high-performance adaptive intelligence. By addressing the fundamental trade-offs among efficiency, safety, and generalizability, my work seeks to enable autonomous systems, including robots, autonomous vehicles, and large-scale mobility networks, to operate reliably in complex, uncertain, and dynamic environments.

Research Directions

Reinforcement Learning

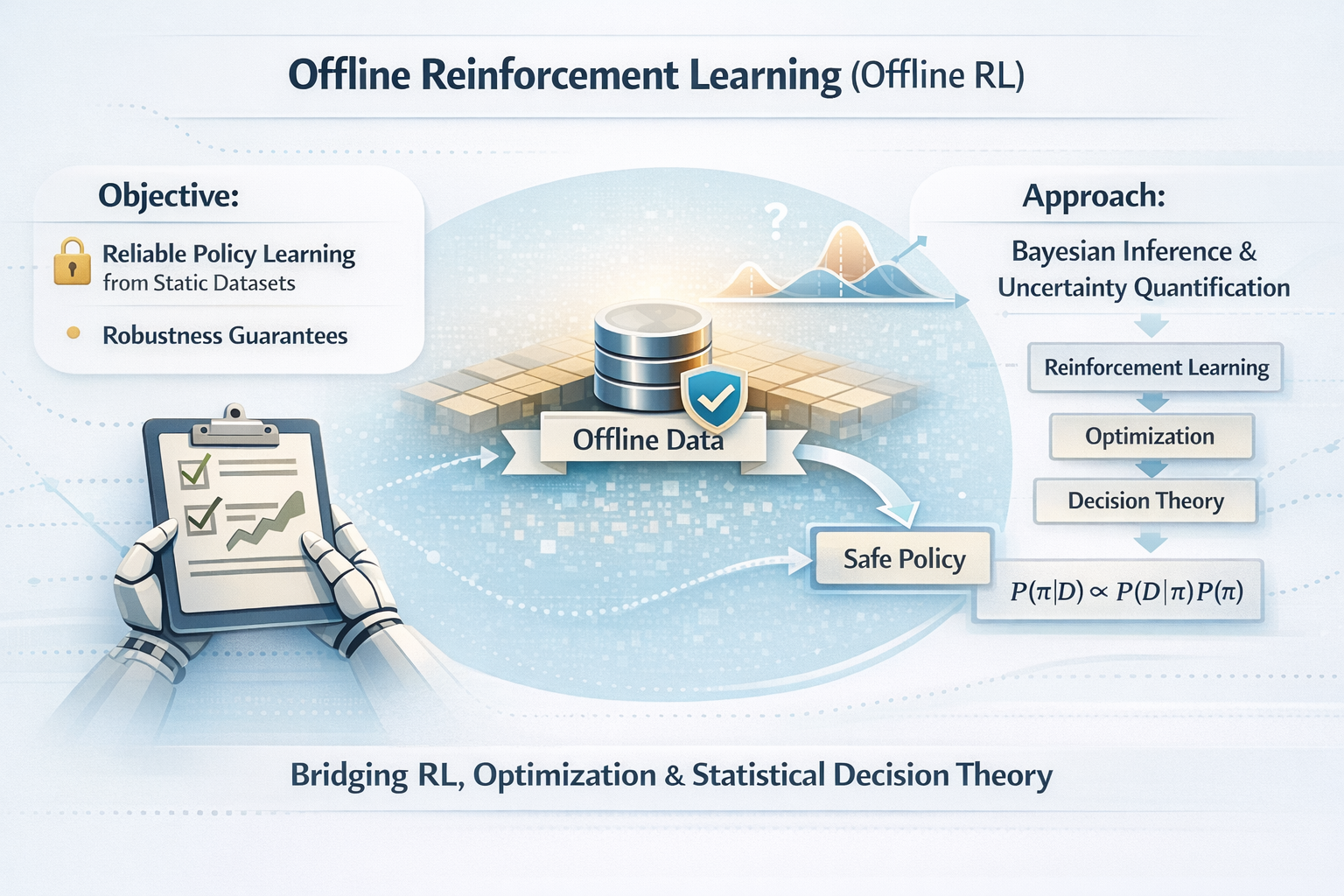

This line of research explores the theoretical and algorithmic foundations of offline and safe multi-agent reinforcement learning (MARL).

World Foundation Models

I investigate the synergy between world models and RL for scalable decision-making under uncertainty.

Dynamic Programming & Optimal Control

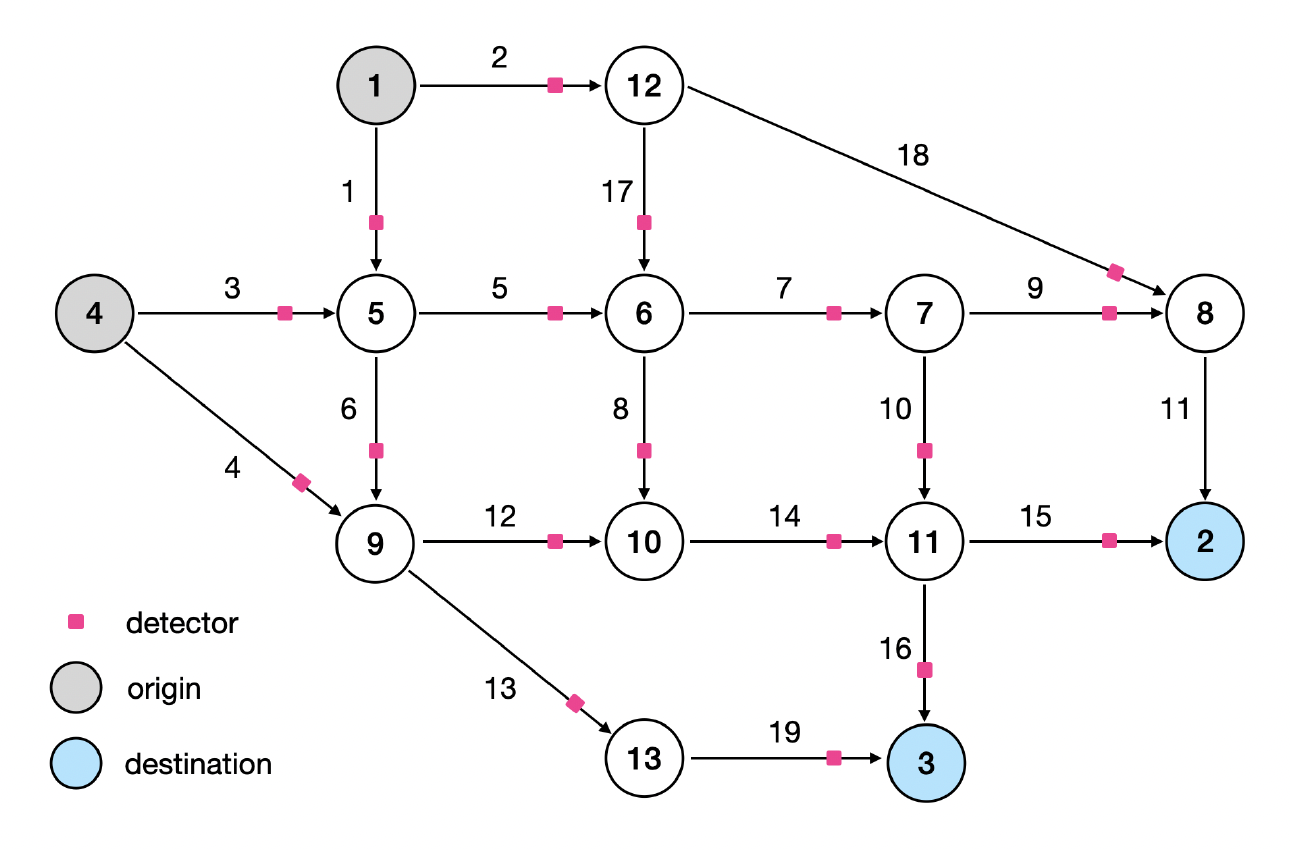

I advance the analytical foundations of dynamic programming and network optimization for multi-agent systems.

Bandit Algorithms & Online Learning

This area focuses on adaptive decision-making in contextual bandit and streaming-data settings.
